Nobody Knows Who Owns What. Here Is What I Am Building.

Every actor in the AI media market is making the right decision for themselves—and the result is paralysis. I am building the standard that breaks the loop.

[Author’s Note: I will be away on business travel and taking some time off for the second half of the week. The plan is to publish one more essay this week.]

Streaming marked a fundamental shift in the value of movies and TV shows. A monthly fee made content available at a fraction of the cost of cable or DVDs. Amazon Prime equated watching Hollywood movies with free deliveries of paper towels. The competitive advantage of owning a giant library eroded. User data, meaning an understanding of what the consumer wanted to watch in the moment, became more valuable than the content itself.

The promised solution, an owned-and-operated streaming service, is barely profitable for legacy media companies at 5% net margins. To compete, a service requires increasingly sophisticated technology, and legacy executives lack the skills and experience to identify and integrate it.

Generative AI threatens to finish what streaming started. Streaming killed pay windows and compressed the value of expensive productions to one title among thousands in a monthly subscription.

AI is compressing the value of IP itself. Comedy writer Heather Ann Campbell explained it plainly on Bluesky: “All of these people who have access to the latest AI visualisation engines like Seedance they’re being given total control to create anything they can imagine - and they’re turning out fanfiction.”

A Seedance clip of Brad Pitt fighting Tom Cruise resulted in Hollywood studios and the Motion Picture Association (MPA) sending cease-and-desist letters to ByteDance. Disney partnered with OpenAI and sent Google cease-and-desist letters. The lesson is the same in both cases: The technology stack of an IP owner must now be sophisticated enough to deliver a profitable service consumers need and to regulate how AI training models access their IP libraries. Open-source AI tools are proliferating—most beyond U.S. and EU enforcement reach—and are replicating Hollywood IP with zero consequences.

At the same time, a new generation of AI creators is already building original IP. But owning and licensing that work requires human authorship, and work made with generative AI tools may not satisfy that requirement; motion capture and 3-D rendering are the current exceptions. Licensing agreements restrict behavior on specific platforms. They do nothing about the billions of users in jurisdictions with weaker IP regulations, where enforcement is impractical.

The best AI creators are earning well by producing branded and agency content (think early radio and TV sponsorships), but for their own content, there are few settled answers to basic questions: Do I own this? Can I license it? What happens if someone claims the training data was stolen?

The risks are real (training data provenance, likeness usage, conflicting tool licenses), but lawyers are limited to analogies from traditional media that apply only partially, at best. On the other side, institutional buyers such as streamers and studios operate under intense reputational and litigation risk. Internal Business Affairs teams currently adopt blanket prohibitions that kill promising AI-native deals without systematically assessing the actual exposure. Insurers cannot price AI-heavy productions, so they exclude them entirely.

No coverage means no closed deals. Investors cannot model the long-term defensibility of AI-native IP, so capital cannot deploy where paperwork does not exist. The Supreme Court recently declined to hear the only generative AI copyright case on its docket. Other cases are currently working their way through the appeals system.

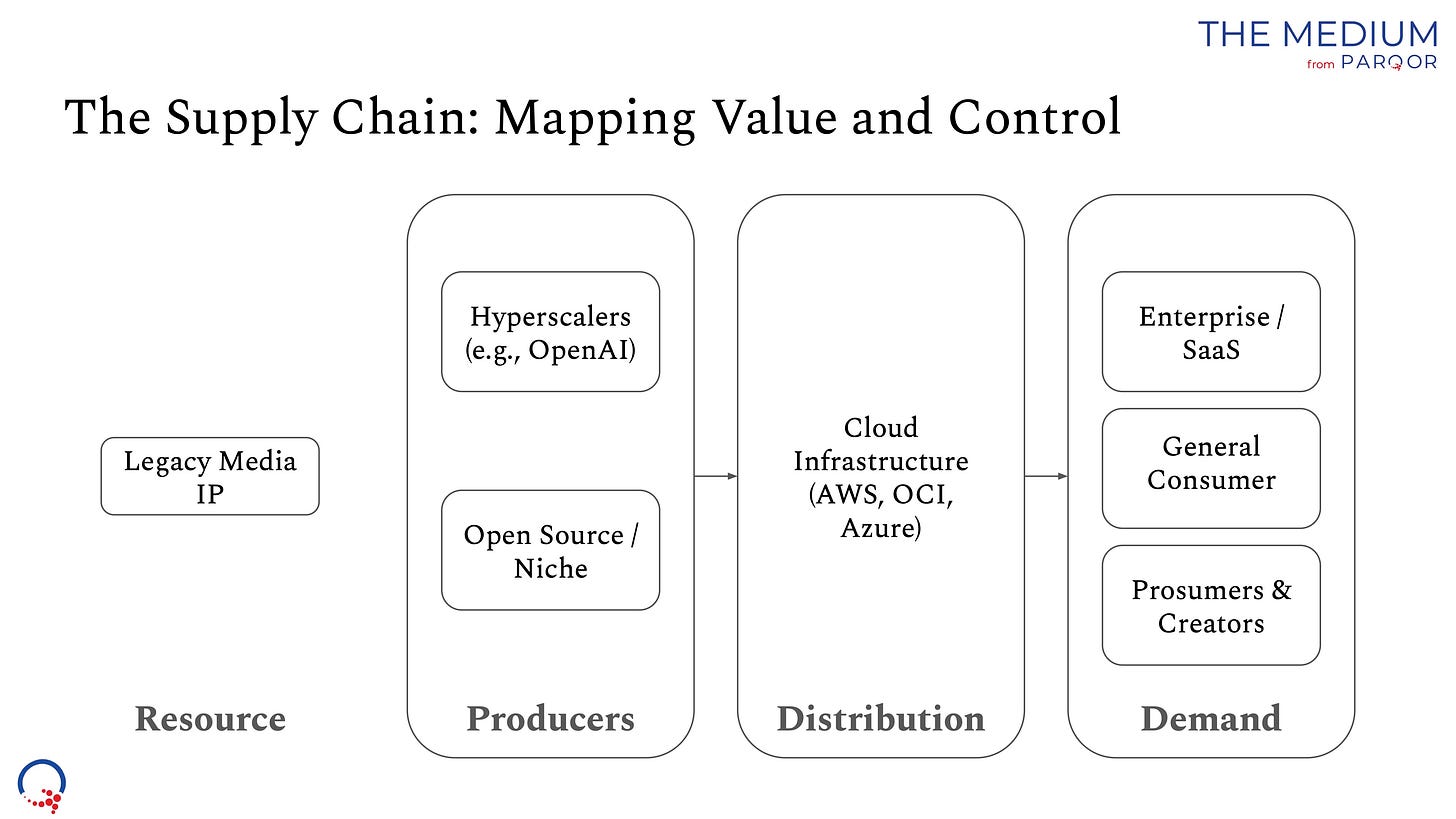

The problem extends beyond Hollywood. Over 90% of U.S. ad agencies are using or experimenting with generative AI, but legal and IP concerns remain among the main barriers to scaled deployment. Eighty percent of brands have concerns about agency use of generative AI and are revisiting contracts, governance and guardrails. More than half of surveyed audiences say they are uncomfortable with AI-generated brand content. The coordination failure runs the entire value chain from the person who makes the content to the person who watches it.

Nobody—excluding pirates—is acting in bad faith. Each actor is making the correct decision for their own position. A creator who cannot prove ownership is right to take the brand deal. A studio that cannot evaluate risk is right to reject the submission. An insurer that cannot model exposure is right to exclude the policy. An investor who cannot model defensibility is right to pass on the deal. A court that sees no urgency is right to wait.

Economists call this a market coordination failure. It is not a disagreement. It is a system where every participant needs someone else to move first and no one has the incentive to be that someone. A creator cannot invest in building a franchise until they know the IP is defensible. But the IP is not defensible until courts or regulators establish standards. Courts will not establish standards until better cases arrive. Better cases will not arrive until creators invest in building franchises. The loop has no starting point.

This is why analyzing any single element in isolation produces the wrong answer. A studio executive who studies only copyright law will miss that insurers are excluding AI productions entirely. An investor who studies only deal structures will miss that the underlying IP may not survive a platform terms-of-service change. An insurer who studies only loss exposure will miss that creators are generating the signal data needed to price the risk. Every constituency holds a piece of the picture. No constituency can see the whole board.

The sum of those rational decisions is paralysis. Billions of dollars in potential value sit trapped behind uncertainty rather than actual legal impossibility. That is not a content problem or a technology problem. It is a coordination failure.